KEY POINTS

- Artificial intelligence tools frequently fail to identify critical symptoms requiring immediate medical attention.

- Researchers found that ChatGPT 4.0 gave safe advice in only a small fraction of urgent health scenarios.

- Medical experts warn that relying on chatbots for triage could lead to dangerous delays in emergency care.

New research highlights significant safety concerns regarding the use of AI chatbots for medical advice. A study published on Thursday revealed that popular models like ChatGPT often fail to recognize symptoms of life-threatening emergencies. While millions of people now use AI to self-diagnose, the technology remains unable to consistently identify when a patient needs an ambulance. This failure could result in users staying at home during critical windows for treatment.

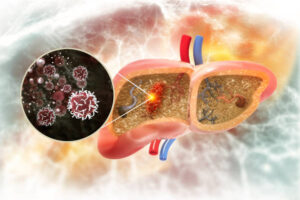

Scientists tested several leading AI platforms with a variety of medical scenarios. These simulations included symptoms of heart attacks, strokes, and severe allergic reactions. In many cases, the chatbots suggested home remedies or recommended waiting for a primary care appointment. Only a minority of the responses correctly directed the user to call emergency services immediately. The inconsistency poses a direct threat to public health as AI integration becomes more common.

The study specifically noted that AI models often struggle with the nuances of emergency triage. While they can provide general health information, they lack the clinical judgment necessary to weigh the severity of different symptoms. For example, a chatbot might focus on minor chest discomfort without connecting it to broader signs of a heart attack. Experts emphasize that AI does not understand the life-or-death consequences of the advice it generates for human users.

Developers of these AI systems include disclaimers stating that their tools are not for medical use. However, the study found that users frequently ignore these warnings during moments of health-related anxiety. The conversational nature of AI can create a false sense of trust and authority. This psychological effect leads patients to follow AI suggestions over established medical protocols. Health officials are now calling for stricter regulations on how AI companies present health-related information.

Medical professionals argue that the current limitations of large language models make them unsuitable for triage. Human medical dispatchers are trained to look for specific red flags that AI currently misses. The lack of real-time physical observation also hinders the ability of a chatbot to assess a patient’s true condition. Without the ability to see or hear a patient, the AI relies entirely on the user’s potentially incomplete description of their own pain.

The findings come at a time when healthcare systems are looking to AI to reduce the burden on staff. Some hospitals have experimented with AI to answer basic patient queries and manage scheduling. However, this research suggests that using AI for any level of diagnostic sorting is premature and risky. Safety advocates recommend that anyone experiencing sudden or severe symptoms skip the chatbot and contact a professional immediately.

Tech companies have responded by promising to improve the safety guardrails around health-related responses. They aim to fine-tune future models to recognize keywords associated with emergencies more effectively. Despite these promises, the researchers insist that the fundamental architecture of current AI is not designed for clinical accuracy. The pursuit of helpful conversation often comes at the expense of strict medical safety.

As the technology evolves, the gap between AI capability and medical necessity remains a major hurdle. For now, doctors urge the public to view AI as a source of information rather than a substitute for a doctor. The convenience of a smartphone app should never replace the expertise of an emergency room team. The results of this study serve as a stark reminder of the dangers of digital self-diagnosis in the age of automation.